Nelder-Mead method is a derivatives-free numerical minimization (maximization) algorithm that is popular among practitioners. In today’s post I will introduce the algorithm, briefly discuss ways it can be modified to suit various optimization problems and implement a variation of the algorithm in VBA.

The Algorithm:

Since Nelder-Mead (NM) is a derivatives-free algorithm it can be applied to optimization problems for which the objective function f (x) is not differentiable. As long as we can evaluate f (x) at any point x we can apply the algorithm. The downside is that NM is a heuristic technique which means that it uses a practical search method that is not guaranteed to converge to the optimal solution.

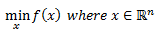

In this section I will discuss the steps of the algorithm. Let’s define the problem as:

This simply means that we are trying to minimize some multivariate function f () that has n dimensional input vector x and where each variable in x is continuous. I will later discuss ways in which we can modify the algorithm to handle problems where some variables in x are discrete or are bounded.

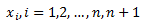

A quick side note, NM is often referred to as Nelder-Mead Simplex, Downhill Simplex, or Amoeba algorithm. This is because at any point in time we are working with n+1 points (ie one point more than the dimension of the problem). Each point that is joined by a line (or edge) is called a vertex. When we plot these points they form a simplex. In one dimension a simplex is a line, in two dimensions a triangle, and in three dimensions it is a tetrahedron.

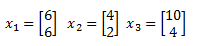

I will use an example in two dimensional space so that we can visually inspect the geometry of the algorithm. We begin by selecting some n+1 points (three points in this example) to form an initial simplex (triangle). Our vertices are(remember that each x is of dimension n):

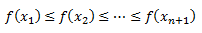

We sort these vertices in increasing order of the objective function values:

We then compute a centroid which is the mean over all the vertices excluding the worst (omit vertex that has the highest objective function value). For our example I will use the following data:

I do not specify an objective function but since we assume the data has been ordered f (x_1) achieves the minimum at this stage.

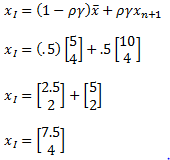

Our centroid can be calculated as:

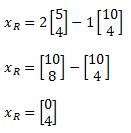

After we have our centroid we compute a reflection of the worst vertex:

Usually rho is set to 1, so we get:

Below is a plot of our initial simplex in grey and the new simplex formed by our reflection point (highlighted in blue).

Label the new point x_r. We now evaluate our objective function at this new point. If the objective function at point x_r, f(x_r), is lower than our current minimum at x_1 then we compute an expansion. This just means we continue in the same direction to see if we can improve on x_r.

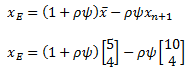

Expansion is given as:

Chi is usually set to 2, using that we have:

Below is a plot of the new simplex. Expansion is highlighted in red. I kept the reflection point so you can see the geometry.

We can now evaluate our objective function at point x_e. If we further improved on f(x_r), ie the objective function at x_e is lower than at x_r, then we remove the worst vertex x_n+1 and replace it with x_e. If f(x_e) is not an improvement on f (x_r) then we replace the worst vertex with x_r.

So far we have taken care of the case where at the very least our reflection operation improved our solution. Now let’s consider what can be done if f(x_r) was not an improvement on the current minimum objective function value f(x_1). We can have a situation where f( x_r) is worse than f(x_1) but is better than the second worst f(x_n). In this case we still improved on the worst vertex so we would accept x_r and replace x_n+1 with it.

Now what if f(x_r) is an improvement on the worst vertex but is not better than the second worse vertex. In this case NM considers a contraction outward.

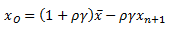

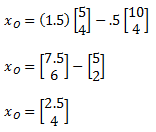

Out-Contraction is given as:

Parameter gamma is usually assigned a value of .5, so we end up with:

We are basically dampening the reflection operation to see if we moved too far initially.

If our new objective function value at point x_o is less than f(x_r) then we accept this change and replace the worst vertex x_n+1 with x_0. Below is a plot of the contraction in red and can be compared to the reflection point.

If out contraction did not improve on reflection then we will shrink the simplex. Shrinking the simplex means we move all vertices closer to the best vertex x_1. Shrink formula is below:

Sigma is typically set equal to .5. Therefore, for x_2 we get:

And for x_3:

Below is a plot of our original simplex and the new shrunk points that are joined by a purple edge.

In other cases we consider a contraction inward. The formula is given below:

You can see that in-contraction is a move of the worst vertex toward the centroid. Below plot includes out-contraction in red and in-contraction in green.

Finally, if at this stage we have not improved on the worst vertex we again shrink the simplex.

At this point I probably have lost most of you. For those who are still with me I created a flowchart of the algo below. Its a busy chart but I think its worthwhile to spend some time inspecting it. The blue rectangles are points in the algorithm where we have to evaluate the objective function. The green circles is where we swap out the worst vertex for some improvement. Finally, the red rectangles are our test conditions where we search for an improvement of the initial simplex.

Modifications:

The NM algorithm has a few drawbacks besides the fact that it is a heuristic approach. The first issue is that the input vector is continuous. In many cases we want to search in a bounded space. For an example in finance, some SABR or Heston model parameters are bounded, therefore we don’t want to search all the parameter space when we are calibrating the models. In Heston and SABR we have parameter rho as an input. Rho captures spot-vol correlation in the model and should be in range (-1,1). An example of search in a bounded space in the field of machine learning is when we are using NM to tune a hyper-parameter of our model. For example, when calibrating a support vector machine we may want to find an optimal Cost parameter that minimizes some cross validation error. Our tuning parameter must be greater than zero. So C should be in range (0,inf).

One way to deal with this is by filtering our objective function. We can assign a large value to f(x) when any x is outside of our bounded search space. So in the case of SABR or Heston, when NM calculates a reflection and takes the value of Rho outside of the bounded space we set the value of the objective function to some high value. This will force the algorithm to perform a contraction and keep us in the search space we desire. This may work but will increase the number of iterations it takes for the algorithm to converge, especially if the minimum is close to the boundary value of Rho.

A more preferred way to deal with the issue is to map our constrained optimization problem to an unconstrained one. For example, If Rho needs to be in range (-1,1) we can amend our objective function to be a function of some variable x where:

So our minimization problem becomes:

We can simply take the inverse of the tanh function to get the value of x.

For boundaries that deal with range (0,inf), like our Cost parameter in case of SVM we can use:

To get back our x value we can take the natural log of Cost.

Another problem that we can often encounter is that our variable x is discrete not continuous. Again let’s take an example from machine learning. Some hyper-parameters are discrete. For instance, the number of nodes or hidden layers in a neural network must be discrete. One possible solution to this problem is to round x to the nearest integer value after every change we make to the simplex.

There are other issues with NM such as collapse of a simplex, premature convergence on local (not global) minimum, failure to converge (stuck in a plateau). There have been many modifications suggested to deal with these issues. Thing like multiple restarts with different starting simplexes and approximation to gradients around the simplex to infer more information about the objective function have been tried and work well in many fields.

VBA implementation:

Since I cannot upload vba enabled workbooks I am attaching a macro-free workbook and the code is at the end of the post.

- Open Excel workbook hit Alt+F11 to open VB Editor. Right click on ThisWorkbook and Insert Module. NelderMead_DownhillSimplex

- Highlight, Copy & Paste the code at the end of this post in its entirety into the Module.

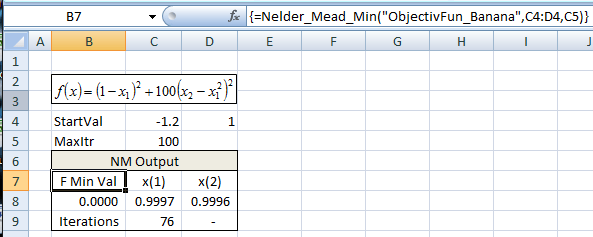

In the workbook I have an example of a where we try to minimize the banana function using starting values (-1.2,1). Formula Nelder_Mead_Min is in range B7:D9. This is an array formula so you need to hit Ctrl_Shift_Enter. After 76 iterations we converge on an answer that is close to the true min of (1,1)

Useful Resources:

- VBA Developer’s Handbook is an excellent book. It was the source for the bubble sort algo that I modified http://ca.wiley.com/WileyCDA/WileyTitle/productCd-078215333X.html

- Wikipedia entry for Bubble Sort Algo https://en.wikipedia.org/wiki/Bubble_sort

- Rosenbrock Function: https://en.wikipedia.org/wiki/Rosenbrock_function#An_example_of_optimization

- Interesting list of optimization test functions http://www.sfu.ca/~ssurjano/optimization.html and https://arxiv.org/pdf/1308.4008.pdf

- Scilab (Open source numerical computation software) has an excellent piece on Nelder-Mead “Nelder-Mead User’s Manual” by Michael Baudin https://wiki.scilab.org/The%20Nelder-Mead%20Component?action=AttachFile&do=view&target=neldermead.pdf

- Excellent book “Option Pricing Models and Volatility” that includes VBA implementation of Heston model that uses Nelder Mead for calibration http://ca.wiley.com/WileyCDA/WileyTitle/productCd-0471794643.html

- Christopher Brooks has a short and interesting paper on the NM algo https://www.cs.usfca.edu/~brooks/papers/amoeba.pdf

Copy Code Starting Below:

Option Explicit

Option Base 1

Function ObjectivFun_Banana(ArgIn)

Dim x1 As Double: x1 = ArgIn(1)

Dim x2 As Double: x2 = ArgIn(2)

ObjectivFun_Banana = (1 - x1) ^ 2 + 100 * (x2 - x1 ^ 2) ^ 2

End Function

Public Function Nelder_Mead_Min(function_name As String, function_parameters As Variant, Optional MaxIterations As Integer = 150)

'Factors for reflection, expansion, contraction, and shrinkage

Dim rho As Double: rho = 1

Dim chi As Double: chi = 2

Dim gamma As Double: gamma = 0.5

Dim sigma As Double: sigma = 0.5

'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx’

'x Variables that will hold function values for

'x reflection, expansion, and both contraction points

'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx’

Dim ReflectionValue As Double: Dim ExpansionValue As Double

Dim OutContractionValue As Double: Dim InContractionValue As Double

Dim ShrinkFlag As Boolean

Dim ParamCount As Integer: ParamCount = CInt(function_parameters.Cells.Count) 'dimension of Fun

'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx’

'x Arrays that will hold new vertices

'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx’

Dim x_bar() As Double: ReDim x_bar(ParamCount)

Dim x_r() As Double: ReDim x_r(ParamCount)

Dim x_e() As Double: ReDim x_e(ParamCount)

Dim x_o() As Double: ReDim x_o(ParamCount)

Dim x_i() As Double: ReDim x_i(ParamCount)

Dim ii As Integer: ii = 1 ' counter

Dim jj As Integer: jj = 1 ' counter

Dim Temp_x_vec() As Double: ReDim Temp_x_vec(ParamCount)

'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx’

'x creates a ParamCount+1 x ParamCount+1 matrix called Simplex_Matrix

'x 1st column is function value while remaining columns are the vertecise

'x page 20 https://wiki.scilab.org/The%20Nelder

'x -Mead%20Component?action=AttachFile&do=view&target=neldermead.pdf

'xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx

Dim Simplex_Matrix() As Double: ReDim Simplex_Matrix(ParamCount + 1, ParamCount + 1)

Dim delta_u As Double: delta_u = 0.05

Dim delta_z As Double: delta_z = 0.0075

For ii = 1 To (ParamCount + 1)

For jj = 1 To ParamCount

If ii = 1 Then ' first row uses supplied starting values

Simplex_Matrix(ii, jj + 1) = function_parameters(jj)

ElseIf (jj = ii - 1) And (function_parameters(jj) <> 0) Then

Simplex_Matrix(ii, jj + 1) = function_parameters(jj) * (1 + delta_u)

ElseIf (jj = ii - 1) And (function_parameters(jj) = 0) Then

Simplex_Matrix(ii, jj + 1) = delta_z

Else

Simplex_Matrix(ii, jj + 1) = function_parameters(jj)

End If

Temp_x_vec(jj) = Simplex_Matrix(ii, jj + 1) ' temp vector of x to evaluate ObjFun

Next jj

Simplex_Matrix(ii, 1) = Run(function_name, Temp_x_vec) ' eval ObjFun

Next ii

' sort simplex_matrix based on FunObj

Simplex_Matrix = BubbleSort(Simplex_Matrix)

Dim TotalIt As Integer: TotalIt = 1 ' iteration count

Dim Epsilon As Double: Epsilon = 0.000001 ' convergence criteria

' main loop

Do While Abs(Simplex_Matrix(1, 1) - Simplex_Matrix(ParamCount + 1, 1)) > Epsilon And TotalIt < MaxIterations

' compute centroid

For jj = 1 To ParamCount

x_bar(jj) = 0

For ii = 1 To ParamCount ' sum all x excluding best

x_bar(jj) = x_bar(jj) + Simplex_Matrix(ii, jj + 1)

Next ii

x_bar(jj) = x_bar(jj) / ParamCount ' compute average

Next jj

For jj = 1 To ParamCount ' compute reflection x_r

x_r(jj) = (1 + rho) * x_bar(jj) - rho * Simplex_Matrix(ParamCount + 1, jj + 1)

Next jj

ReflectionValue = Run(function_name, x_r) ' compute f(x_r)

If ReflectionValue < Simplex_Matrix(1, 1) Then ' if f(x_r)<f(x_1) then

For jj = 1 To ParamCount ' compute expansion x_e

x_e(jj) = (1 + rho * chi) * x_bar(jj) - rho * chi * Simplex_Matrix(ParamCount + 1, jj + 1)

Next jj

ExpansionValue = Run(function_name, x_e) ' compute f(x_e)

If ExpansionValue < ReflectionValue Then ' if f(x_e)<f(x_r) then

For jj = 1 To ParamCount 'replace x_n+1 with x_e

Simplex_Matrix(ParamCount + 1, jj + 1) = x_e(jj)

Next jj

Simplex_Matrix(ParamCount + 1, 1) = ExpansionValue 'replace F(x_n+1) with F(x_e)

Else

For jj = 1 To ParamCount 'replace x_n+1 with x_r

Simplex_Matrix(ParamCount + 1, jj + 1) = x_r(jj)

Next jj

Simplex_Matrix(ParamCount + 1, 1) = ReflectionValue 'replace F(x_n+1) with F(x_r)

End If

ElseIf Simplex_Matrix(1, 1) < ReflectionValue And ReflectionValue < Simplex_Matrix(ParamCount, 1) Then ' else if f(x_1)<f(x_r)<f(x_n) then

For jj = 1 To ParamCount 'replace x_n+1 with x_r

Simplex_Matrix(ParamCount + 1, jj + 1) = x_r(jj)

Next jj

Simplex_Matrix(ParamCount + 1, 1) = ReflectionValue 'replace F(x_n+1) with F(x_r)

ElseIf Simplex_Matrix(ParamCount, 1) < ReflectionValue And ReflectionValue < Simplex_Matrix(ParamCount + 1, 1) Then ' else if f(x_n)<f(x_r)<f(x_n+1) then

For jj = 1 To ParamCount ' compute outside contraction x_0

x_o(jj) = (1 + rho * gamma) * x_bar(jj) - rho * gamma * Simplex_Matrix(ParamCount + 1, jj + 1)

Next jj

OutContractionValue = Run(function_name, x_o) ' compute f(x_o)

If OutContractionValue < ReflectionValue Then ' if f(x_o)<f(x_r) then

For jj = 1 To ParamCount 'replace x_n+1 with x_

Simplex_Matrix(ParamCount + 1, jj + 1) = x_o(jj)

Next jj

Simplex_Matrix(ParamCount + 1, 1) = OutContractionValue 'replace F(x_n+1) with F(x_o)

Else

ShrinkFlag = True ' compute shrinkage later

End If

Else

For jj = 1 To ParamCount ' compute inside contraction x_i

x_i(jj) = (1 - gamma) * x_bar(jj) + gamma * Simplex_Matrix(ParamCount + 1, jj + 1)

Next jj

InContractionValue = Run(function_name, x_i) ' compute f(x_i)

If InContractionValue < Simplex_Matrix(ParamCount + 1, 1) Then ' if f(x_i)<f(x_n+1) then

For jj = 1 To ParamCount 'replace x_n+1 with x_i

Simplex_Matrix(ParamCount + 1, jj + 1) = x_i(jj)

Next jj

Simplex_Matrix(ParamCount + 1, 1) = InContractionValue 'replace F(x_n+1) with F(x_i)

Else

ShrinkFlag = True ' compute shrinkage later

End If

End If

If ShrinkFlag = True Then

For ii = 1 To ParamCount ' compute shrinkage

For jj = 1 To ParamCount

Temp_x_vec(jj) = Simplex_Matrix(1, jj + 1) + sigma * (Simplex_Matrix(ii, jj + 1) - Simplex_Matrix(1, jj + 1))

Simplex_Matrix(ii, jj + 1) = Temp_x_vec(jj)

Next jj

Simplex_Matrix(ii, 1) = Run(function_name, Temp_x_vec) ' compute f(x_shrink)

Next ii

ShrinkFlag = False

End If

Simplex_Matrix = BubbleSort(Simplex_Matrix)

TotalIt = TotalIt + 1

Loop

Dim output() As Variant: ReDim output(3, ParamCount + 1)

output(1, 1) = "F Min Val"

output(2, 1) = Simplex_Matrix(1, 1)

For ii = 1 To ParamCount

output(1, ii + 1) = "x(" & ii & ")"

output(2, ii + 1) = Simplex_Matrix(2, ii + 1)

Next ii

output(3, 1) = "Iterations"

output(3, 2) = TotalIt

Nelder_Mead_Min = output

End Function

Function BubbleSort(ArgIn As Variant)

'x Adapted from VBA Developers GudIe with amendment to sort a matrix instead of a vector

Dim Target_Mat As Variant: Target_Mat = ArgIn

Dim RowCount As Integer: RowCount = CInt(UBound(Target_Mat, 1))

Dim ColCount As Integer: ColCount = CInt(UBound(Target_Mat, 2))

Dim SortedFlag As Boolean

Dim ii As Integer

Dim jj As Integer

Dim bb As Integer

Dim Temp() As Double

ReDim Temp(ColCount) As Double

ii = 0

Do While (ii < RowCount) And Not SortedFlag

SortedFlag = True

ii = ii + 1

For jj = 1 To RowCount - ii

If Target_Mat(jj, 1) > Target_Mat(jj + 1, 1) Then

For bb = 1 To ColCount

Temp(bb) = Target_Mat(jj + 1, bb)

Target_Mat(jj + 1, bb) = Target_Mat(jj, bb)

Target_Mat(jj, bb) = Temp(bb)

Next bb

SortedFlag = False

End If

Next jj

Loop

BubbleSort = Target_Mat

End Function

A very simple and important improvement of the Nelder-Mead algorithm is to keep restarting it from the last solution obtained, until no improvement is obtained to a given accuracy (10^-4 or other as preferred).

This will dramatically increase its performance (at of course additional running time) on difficult problems, non-smooth, non-convex, non-linear (both in terms of the objective or constraint function). In practice this ‘restarted nelder-mead’ allways has very strong convergence to local optimality (but without theoretical convergence guarantees, for those, I recommend looking at the works of Charles Audet, MADS in particular).

See for instance https://nl.mathworks.com/matlabcentral/fileexchange/33328-improving-the-convergence-of-nelder-mead–and-so-fminsearch- for more on this topic, or relevant sections of http://hdl.handle.net/2078.1/114822

LikeLike

Hi, i put the code in workbook but gives erro, please upload the file with code working, plese. thank you.

LikeLike

Hello,

Just saw your comments now (the website only sent me the notification now the was a message). For the code you can look at the code example I give in the Mathworks link, if you read that code you will quickly see how to implement a restarting nelder-mead.

LikeLike

please share nelder mead thesis

LikeLike

Hi Nadia, I don’t have their thesis. Their reference article is a very well known paper from 1965! Nelder, John A.; R. Mead (1965). “A simplex method for function minimization”. Computer Journal. 7: 308–313. doi:10.1093/comjnl/7.4.308.

Just look for example at the wikipedia page as a start for more details: https://en.wikipedia.org/wiki/Nelder%E2%80%93Mead_method

LikeLike

Thank you for the explanation and for the VBA code!. In used the VBA code and it works well. I also tried it on the “Himmelsblau” function which has 4 local minima: https://en.wikipedia.org/wiki/Nelder%E2%80%93Mead_method

The code always finds the nearest local minimum.

This proofs that the code works on smooth functions. I am now going use the VBA code to minimize a discontinuous function that Excel’s Solver can not tackle.

LikeLike